Challenging, but rewarding

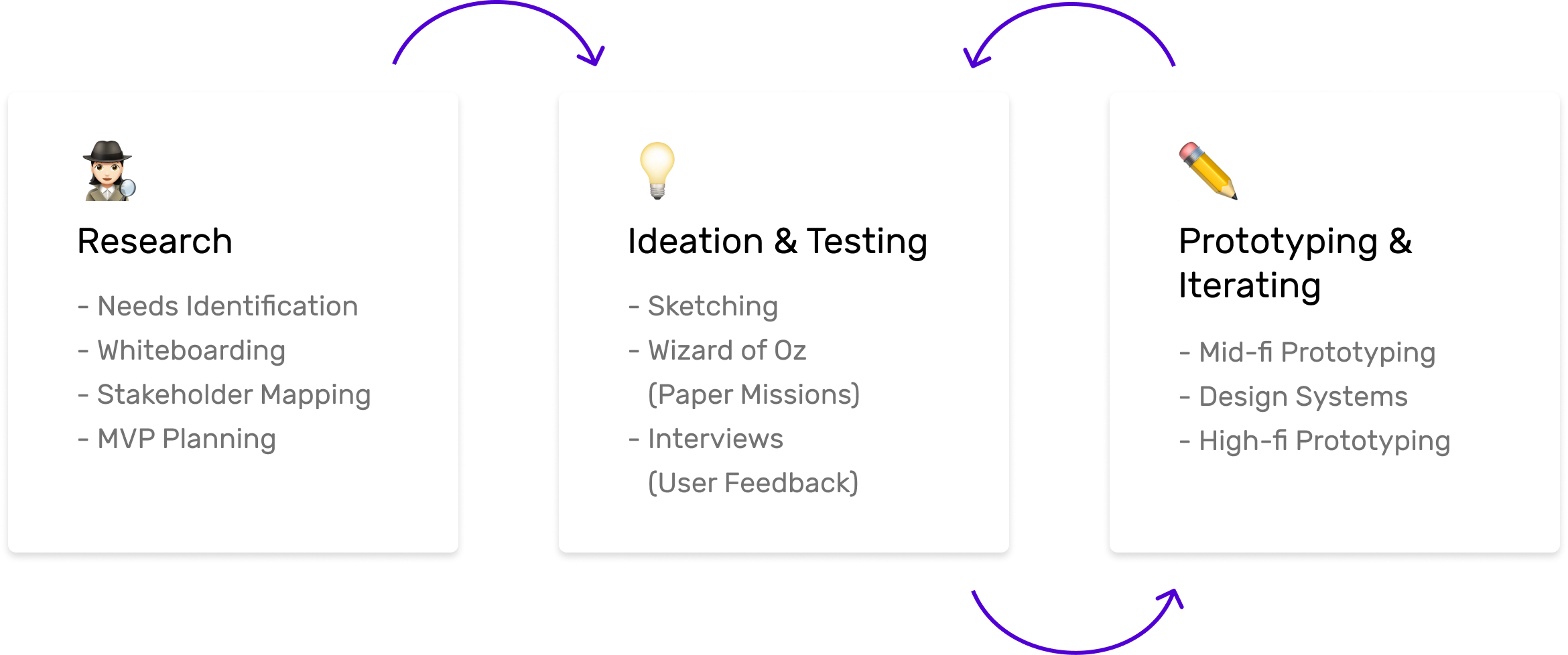

Onboarding for this project was tough because of the sheer amount of information I had to absorb. That’s why I found the user feedback sessions for the paper missions so helpful: they pointed me to the right people to speak to about the features we were designing.

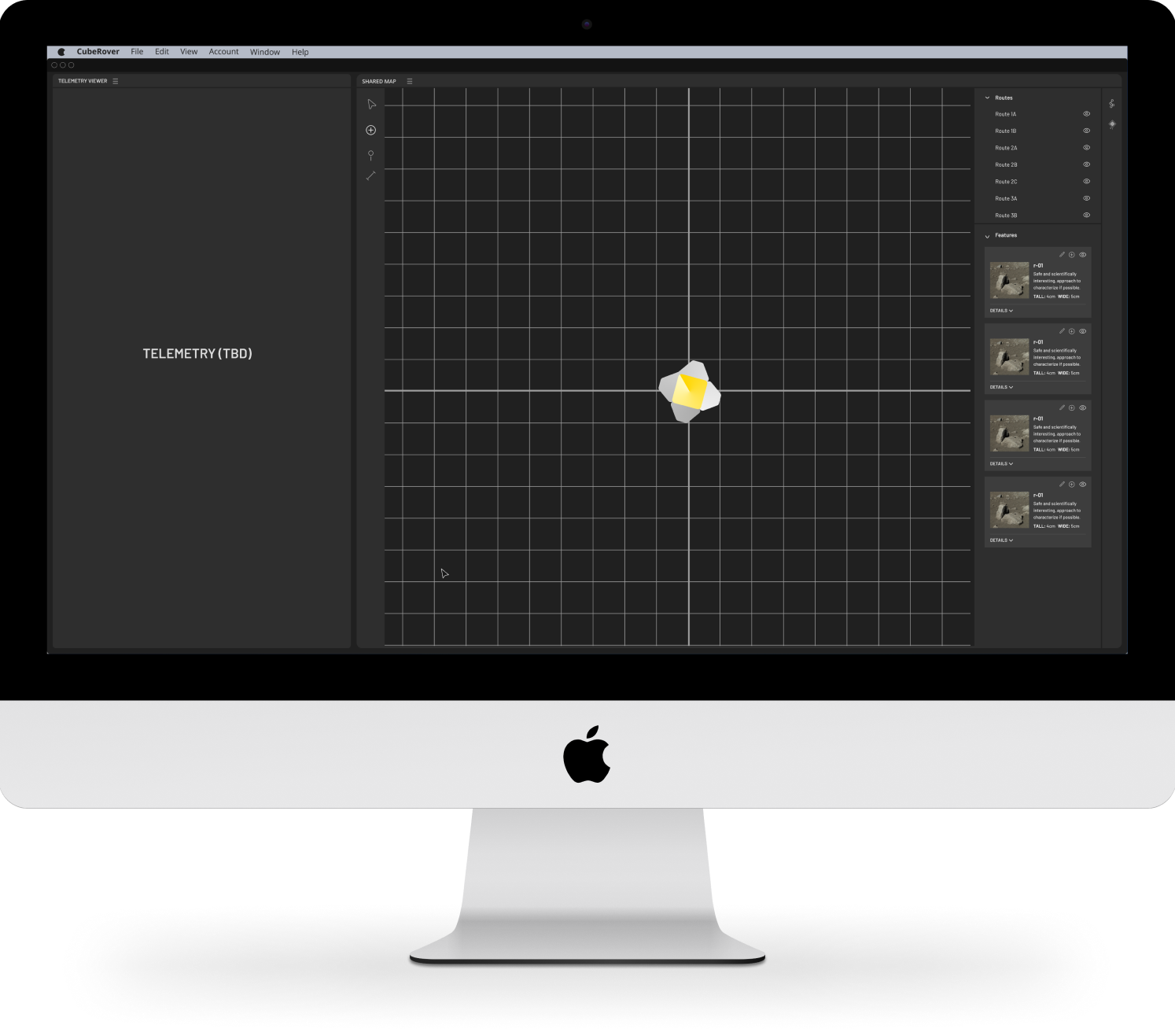

Overall, I’m so grateful I was able to contribute to CubeRover, and I can’t wait to see it launch. In the future, I’m hoping to work on more out-of-the-box projects like this!